In modern manufacturing, operational excellence rests on robust, deterministic scheduling, in which every batch, machine, and resource is accounted for in hour-by-hour detail. Yet even with advanced planning tools, the future remains uncertain. How will costs shift if we run ten extra batches on the second shift? If we defer Saturday’s load to Monday, will capacity be exceeded or will resource bottlenecks emerge?

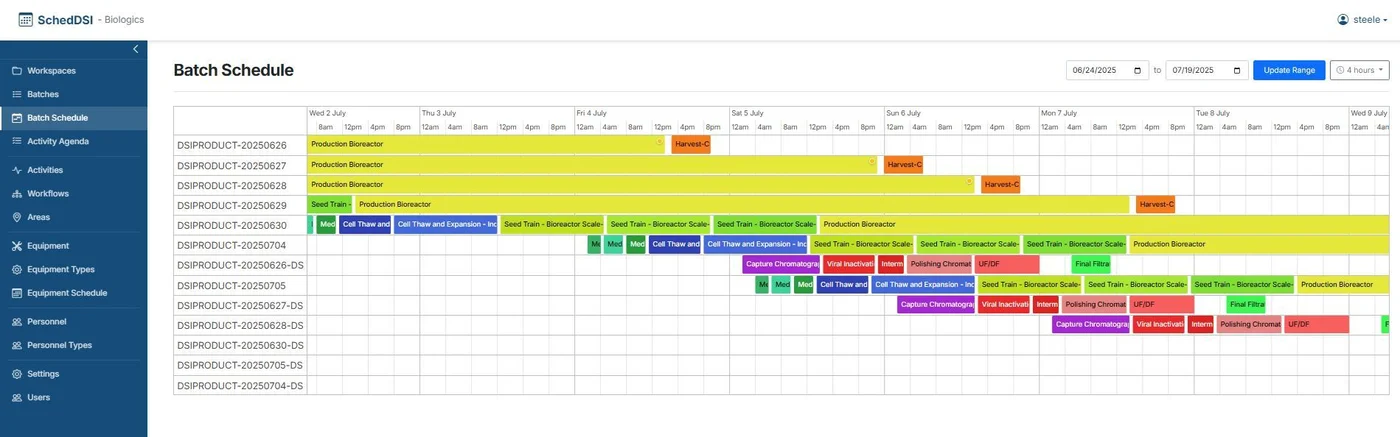

Enter SchedDSI: a powerful, intuitive, and highly configurable platform for finite-capacity production scheduling. It offers simplicity through drag-and-drop interfaces and depth through embedded Python scripting for complex constraints, and it delivers real-time visibility into workflows, resource models, dependencies, and batch logic. What truly sets the platform apart, however, is a generative large language model (LLM) layered on top of the scheduling engine.

This LLM does not replace the deterministic core; it augments it. Working through a dedicated tools layer, the LLM becomes an interactive assistant: users can ask what the material cost will be if they run ten batches on second shift, or whether moving Saturday’s load to Monday will create equipment bottlenecks next week. The model translates these natural-language questions into operations on live schedule data and returns precise, actionable answers. Planners get the best of both worlds: the reliability of finite scheduling and the flexibility of what-if scenario modeling.

With the right tooling, deterministic scheduling gains a conversational what-if layer without giving up its rigor.

Turning Natural-Language What-Ifs into Deterministic, Auditable Answers

Most AI-enabled scheduling products stop at chat. SchedDSI goes further with a dedicated tools layer that connects natural-language prompts to precise database queries and domain-specific logic, keeping answers safe, repeatable, and fast. This is how planners can ask free-form questions like “What will the material cost be if we run 10 batches on second shift?” or “If we push Saturday’s batches to Monday, will we bottleneck next week?” and get answers grounded in live scheduling data rather than guesses.

At its core, the tools layer is a lightweight Flask service that exposes two primary behaviors.

Load and index context:
- Convert workspace metadata, such as areas, workflows, activities, and batches, into concise, structured prompts.
- Embed those prompts and build a vector index so the system can instantly retrieve the most relevant facts for any question.
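
The load-and-index step can be sketched in a few lines of Python. This is a minimal illustration, not SchedDSI's implementation: the workspace dictionary, its field names, and the helpers `metadata_to_prompts`, `embed`, and `VectorIndex` are hypothetical, and the bag-of-words `embed` function stands in for a real embedding model so the example runs on the standard library alone.

```python
import math
import re
from collections import Counter

# Hypothetical workspace metadata; SchedDSI's real field names may differ.
WORKSPACE = {
    "areas": ["Purification", "Fermentation"],
    "workflows": [
        {"name": "Harvest", "area": "Purification", "activities": ["Filter", "Chromatography"]},
        {"name": "Seed Train", "area": "Fermentation", "activities": ["Inoculate", "Grow"]},
    ],
}


def metadata_to_prompts(ws: dict) -> list[str]:
    """Flatten metadata into short, self-describing facts (one fact per chunk)."""
    prompts = [f"Area {a} exists in this workspace." for a in ws["areas"]]
    for wf in ws["workflows"]:
        steps = ", ".join(wf["activities"])
        prompts.append(f"Workflow {wf['name']} runs in area {wf['area']} with activities: {steps}.")
    return prompts


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a stand-in for a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)  # Counter returns 0 for missing terms
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorIndex:
    def __init__(self, prompts: list[str]):
        # Embed every prompt once, at load time.
        self.entries = [(p, embed(p)) for p in prompts]

    def search(self, question: str, k: int = 2) -> list[str]:
        """Return the k prompts most similar to the question."""
        q = embed(question)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[1]), reverse=True)
        return [p for p, _ in ranked[:k]]
```

A production index would swap in learned embeddings and an approximate-nearest-neighbor store, but the shape stays the same: one short fact per chunk, embedded once at load time, ranked by similarity at question time.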

Query with live data and tools:
- Parse the user’s natural-language prompt to detect intent.
- Call purpose-built tools that query read-only operational data.
- Augment the LLM context with both retrieved prompt context and real-time database results.
- Synthesize an answer that prioritizes current numbers over static context.
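
The rule that current numbers win over static context can be made explicit when the prompt is assembled. The sketch below is one plausible approach, assuming tool results arrive as JSON-serializable values keyed by tool name; `build_context` and its section labels are hypothetical. Live data is placed ahead of the retrieved background, and the opening instruction tells the model which source wins on conflict.

```python
import json


def build_context(question: str, retrieved_facts: list[str], live_results: dict) -> str:
    """Assemble the model's context with live tool output ahead of static background.

    live_results maps a (hypothetical) tool name to its JSON-serializable output.
    """
    lines = [
        "Answer from LIVE DATA first. If LIVE DATA conflicts with BACKGROUND, trust LIVE DATA.",
        "LIVE DATA:",
    ]
    for tool, result in live_results.items():
        # sort_keys makes the rendering deterministic for identical results.
        lines.append(f"- {tool}: {json.dumps(result, sort_keys=True)}")
    lines.append("BACKGROUND:")
    lines.extend(f"- {fact}" for fact in retrieved_facts)
    lines.append(f"QUESTION: {question}")
    return "\n".join(lines)
```

Ordering alone does not guarantee the model obeys the rule, which is why the conflict instruction is stated in the prompt itself.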

This structure keeps the LLM honest: it reasons with facts instead of hallucinating around them.

Why prompts and database access matter

Prompts provide map-level knowledge: which areas exist, what workflows look like, and how batches relate to activities. Database access provides the ground truth: statuses, availability, alerts, activity durations, and variable values. Together they produce answers that are explanatory and numerically correct.

How a question flows through the system

1. The user asks a question in plain English.
2. Intent is detected and matched to the correct tool.
3. Tool calls fetch authoritative live data.
4. Results are merged into context with relevant retrieved workflow facts.
5. The model returns an answer that explains the operational consequences.
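
The five steps above can be sketched as a small dispatcher. Everything here is illustrative: the tool functions return canned rows instead of querying a database, the regular-expression rules stand in for whatever intent detection SchedDSI actually uses, and `llm` is any callable that takes a prompt string and returns text.

```python
import re
from typing import Callable


# Hypothetical tools; a real deployment would run read-only queries here.
def batch_alerts(area: str) -> list[dict]:
    return [{"batch": "B-102", "area": area, "severity": "critical"}]


def equipment_availability(area: str) -> list[dict]:
    return [{"equipment": "Filter-01", "area": area, "available": False}]


# Step 2: keyword rules mapping intents to tools (a stand-in for a real intent detector).
INTENT_RULES: list[tuple[str, Callable[[str], list[dict]]]] = [
    (r"\balerts?\b", batch_alerts),
    (r"\b(equipment|available|availability)\b", equipment_availability),
]
KNOWN_AREAS = ["Purification", "Fermentation"]


def detect(question: str):
    """Return (tool, area) for the first matching intent rule, or (None, area)."""
    area = next((a for a in KNOWN_AREAS if a.lower() in question.lower()), None)
    for pattern, tool in INTENT_RULES:
        if re.search(pattern, question, re.IGNORECASE):
            return tool, area
    return None, area


def answer(question: str, llm: Callable[[str], str]) -> str:
    tool, area = detect(question)  # step 2: intent detection
    if tool is None or area is None:
        # No tool or area matched: fall back to answering from context only.
        return llm(f"QUESTION: {question}")
    rows = tool(area)  # step 3: fetch live data
    context = f"LIVE DATA from {tool.__name__}: {rows}\nQUESTION: {question}"  # step 4: merge
    return llm(context)  # step 5: model explains the result
```

Passing `llm=lambda p: p` echoes the assembled prompt, which is a convenient way to inspect exactly what the model would see.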

Common planner tasks supported by this architecture include:
- querying activities by area
- querying workflows by area
- viewing batches by status and area
- checking equipment availability
- checking personnel availability
- drilling into batch activity details
- summarizing workspace status
- surfacing batch alerts by severity
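
Each of these tasks maps naturally to a small, parameterized, read-only function. The sketch below assumes a SQLite store with hypothetical `batches` and `alerts` tables (SchedDSI's actual database and schema are not described here) and uses SQLite's `PRAGMA query_only` so the connection refuses writes and schema changes.

```python
import sqlite3


def make_read_only(conn: sqlite3.Connection) -> sqlite3.Connection:
    """Enable SQLite's query_only pragma: INSERT/UPDATE/DELETE/CREATE/DROP now fail."""
    conn.execute("PRAGMA query_only = ON")
    return conn


def batches_by_status_and_area(conn: sqlite3.Connection, status: str, area: str) -> list[tuple]:
    """Hypothetical tool: fixed SQL text; caller-supplied values are bound as parameters."""
    return conn.execute(
        "SELECT batch_id, status FROM batches "
        "WHERE status = ? AND area = ? ORDER BY batch_id",
        (status, area),
    ).fetchall()


def alerts_by_severity(conn: sqlite3.Connection, severity: str) -> list[tuple]:
    """Hypothetical tool: surface batch alerts at a single severity level."""
    return conn.execute(
        "SELECT batch_id, message FROM alerts WHERE severity = ? ORDER BY batch_id",
        (severity,),
    ).fetchall()
```

Because the SQL text is fixed and values are bound as parameters, the model can influence which rows come back but not what the statement does.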

Why this beats a chatbot on a database

Traditional chat-over-data systems force the model to synthesize SQL from scratch. That is brittle, risky, and difficult to maintain. SchedDSI instead keeps the SQL and business rules inside tested tools and uses the language model for reasoning, tradeoffs, and scenario exploration.
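
One way to keep SQL out of the model's hands is to let it propose only a tool name and arguments, then validate that proposal before anything runs. The sketch below assumes the model emits a JSON object of the form `{"tool": ..., "args": {...}}`; the registry, the allowed status values, and `parse_tool_call` are hypothetical.

```python
import json

# Hypothetical registry: tool name -> {argument name: expected Python type}.
TOOL_SCHEMAS = {
    "batches_by_status_and_area": {"status": str, "area": str},
    "alerts_by_severity": {"severity": str},
}
# Illustrative domain check; real status values would come from the workspace.
ALLOWED_STATUSES = {"queued", "running", "complete"}


class ToolCallError(ValueError):
    """Raised when a model-proposed tool call fails validation."""


def parse_tool_call(raw: str) -> tuple[str, dict]:
    """Validate a model-proposed tool call before anything touches the database."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ToolCallError(f"not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ToolCallError("tool call must be a JSON object")
    name, args = call.get("tool"), call.get("args", {})
    # Only registered tools may run; free-form SQL has nowhere to go.
    if not isinstance(name, str) or name not in TOOL_SCHEMAS:
        raise ToolCallError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ToolCallError("args must be a JSON object")
    schema = TOOL_SCHEMAS[name]
    if set(args) != set(schema):
        raise ToolCallError(f"expected arguments {sorted(schema)}, got {sorted(args)}")
    for key, expected in schema.items():
        if not isinstance(args[key], expected):
            raise ToolCallError(f"argument {key!r} must be {expected.__name__}")
    if name == "batches_by_status_and_area" and args["status"] not in ALLOWED_STATUSES:
        raise ToolCallError(f"unknown status: {args['status']!r}")
    return name, args
```

A rejected call can be returned to the model as an error message so it can retry, while the database never sees anything outside the registry.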

The result is a system with deterministic provenance and narrative clarity. Planners get answers with a traceable operational basis and an explanation they can act on.
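
Provenance can be made concrete by attaching a record of every tool call to the answer it supports. This sketch is an assumption about how such a record might look, not SchedDSI's format: it stores the tool name, arguments, fetch time, and a SHA-256 digest of the canonical JSON result, so a later re-run of the same call can be checked against the data the answer was based on.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


def digest(result) -> str:
    """SHA-256 of the result serialized as canonical (key-sorted, compact) JSON."""
    canonical = json.dumps(result, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass
class ToolRecord:
    tool: str
    args: dict
    result_digest: str
    fetched_at: str  # ISO 8601 timestamp in UTC


@dataclass
class TracedAnswer:
    text: str
    provenance: list[ToolRecord] = field(default_factory=list)


def record(tool: str, args: dict, result) -> ToolRecord:
    """Capture what was called, with which arguments, when, and what came back."""
    return ToolRecord(tool, dict(args), digest(result), datetime.now(timezone.utc).isoformat())


def still_matches(rec: ToolRecord, fresh_result) -> bool:
    """True if re-running the call returned exactly the data the answer relied on."""
    return digest(fresh_result) == rec.result_digest
```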

In practice, questions like these become routine planning queries:
- What will the material cost be if we run 10 batches on second shift?
- If we delay Saturday’s batches to Monday, will it bottleneck next week?
- Which batches in Purification currently have critical alerts?
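
At its simplest, the second question reduces to moving work between days and re-checking capacity. The sketch below is a deliberately coarse daily-bucket check, not SchedDSI's finite scheduler: the `Batch` fields, day labels, and per-equipment hour capacities are hypothetical, and real sequencing, changeovers, and shift calendars are ignored.

```python
from collections import defaultdict
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Batch:
    batch_id: str
    day: str  # hypothetical day label, e.g. "Sat" or "Mon"
    equipment: str
    hours: float


def shift_day(batches: list[Batch], from_day: str, to_day: str) -> list[Batch]:
    """Return a what-if copy of the schedule; the input list is left untouched."""
    return [replace(b, day=to_day) if b.day == from_day else b for b in batches]


def overloads(batches: list[Batch], capacity_hours: dict[str, float]) -> dict[tuple[str, str], float]:
    """Return {(day, equipment): booked hours} for every slot that exceeds daily capacity."""
    load: defaultdict[tuple[str, str], float] = defaultdict(float)
    for b in batches:
        load[(b.day, b.equipment)] += b.hours
    return {slot: h for slot, h in load.items() if h > capacity_hours[slot[1]]}
```

Because `shift_day` returns a copy, the what-if never mutates the live schedule, which matches the read-only stance of the tools layer.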

The bottom line is simple: the tools layer is where deterministic scheduling and an LLM copilot meet. Prompts make the model smart about factory structure. Read-only data calls make it correct about factory reality. Together, they turn what-if analysis into an everyday planning superpower.